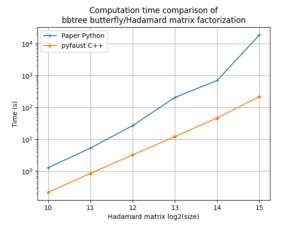

FAµST version 3.25.3 is an optimization update of the C++ implementation of the balanced tree butterfly factorization (API links: matfaust, pyfaust).

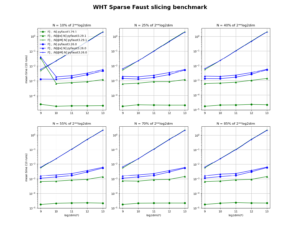

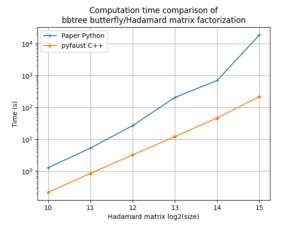

As the figure shows, the FAµST implementation is about an order of magnitude faster than the Python scripts [2] accompanying the reference paper [1] when the Hadamard matrix dimension is large.

The gap is due to the parallelization of the code (made possible by the balanced tree, or bbtree, structure of the factorization) and to the use of CSR sparse matrices for the supports and factors instead of dense matrices.
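To give an intuition of why sparse factors help, here is a minimal pure-Python sketch. It is not the FAµST code and it does not perform the factorization itself: it assumes the well-known butterfly factors of the Hadamard matrix are already given, stores each one in CSR form (`indptr`, `indices`, `data`), and checks that applying them to a vector matches the dense product. All function names (`butterfly_factor_csr`, `csr_matvec`, `hadamard_factors`, `apply_factors`, `dense_hadamard`) are hypothetical and chosen for illustration only.

```python
def butterfly_factor_csr(n, stride):
    """CSR arrays (indptr, indices, data) of one n x n Hadamard butterfly factor.

    Row i has exactly two nonzeros, at columns i and i XOR stride.
    """
    indptr, indices, data = [0], [], []
    for i in range(n):
        lo, hi = i & ~stride, i | stride  # the two columns paired by this factor
        indices += [lo, hi]
        data += [1, 1 if i == lo else -1]  # the [[1, 1], [1, -1]] pattern
        indptr.append(len(indices))
    return indptr, indices, data


def csr_matvec(csr, x):
    """Multiply a CSR matrix by a vector, touching only the stored nonzeros."""
    indptr, indices, data = csr
    return [
        sum(data[k] * x[indices[k]] for k in range(indptr[r], indptr[r + 1]))
        for r in range(len(indptr) - 1)
    ]


def hadamard_factors(n):
    """log2(n) butterfly factors whose product is the n x n Hadamard matrix."""
    assert n > 0 and n & (n - 1) == 0, "n must be a power of two"
    factors, stride = [], n // 2
    while stride >= 1:
        factors.append(butterfly_factor_csr(n, stride))
        stride //= 2
    return factors


def apply_factors(factors, x):
    """Compute (F_1 F_2 ... F_k) x by applying the rightmost factor first."""
    for factor in reversed(factors):
        x = csr_matvec(factor, x)
    return x


def dense_hadamard(n):
    """Unnormalized Sylvester Hadamard matrix as a dense list of lists."""
    return [[-1 if bin(i & j).count("1") % 2 else 1 for j in range(n)]
            for i in range(n)]


n = 16
x = list(range(n))
factors = hadamard_factors(n)
y_sparse = apply_factors(factors, x)

H = dense_hadamard(n)
y_dense = [sum(H[i][j] * x[j] for j in range(n)) for i in range(n)]

nnz_sparse = sum(len(data) for _, _, data in factors)  # 2 * n * log2(n)
nnz_dense = n * n
print(y_sparse == y_dense, nnz_sparse, nnz_dense)
```

The factors hold 2·n·log2(n) nonzeros in total, against n² entries for the dense matrix, so the saving grows with the dimension. The sketch does not cover the parallelization.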

Note: the computer used to produce the figure data has 1450 GiB of RAM and 64 cores (Intel(R) Xeon(R) Gold 5218 CPU @ 2.30GHz).

Brief examples of how to use the pyfaust/matfaust butterfly functions are available on these pages: matfaust, pyfaust.

[1] Quoc-Tung Le, Léon Zheng, Elisa Riccietti, Rémi Gribonval. Fast learning of fast transforms, with guarantees. ICASSP, IEEE International Conference on Acoustics, Speech and Signal Processing, May 2022, Singapore, Singapore. ⟨hal-03438881⟩

[2] Léon Zheng, Quoc-Tung Le, Elisa Riccietti, Rémi Gribonval. Code for reproducible research – Fast learning of fast transforms, with guarantees. 2022, ⟨swh:1:dir:42d42bf905c9f3bbcd6cf5e1e0cda6be2d0d63de;origin=https://hal.archives-ouvertes.fr/hal-03552956;visit=swh:1:snp:6a2256426a0f3a7491086fbf2da554d7589d1e18;anchor=swh:1:rel:f477382c82ada89647864605ea44b12383c04167;path=/⟩. ⟨hal-03552956⟩